Make Better Strategic Decisions Around Slow-Developing Technology

Authors: Tucker J. Marion, David Deeds, and John H. Friar

Self-driving automobiles may seem like a cutting-edge 21st-century technology—a challenge still facing obstacles before widespread adoption. But in fact, autonomous driving has been evolving in fits and starts for a full century. Its evolution can teach managers how to deal with innovations that depend on multiple slow-developing technologies that come together at different speeds and costs.

In 1925, Houdina Radio Control exhibited a vehicle called the American Wonder that drove up Broadway and down Fifth Avenue, reportedly even navigating a traffic jam, without anyone in the driver’s seat. Instead, the car was operated remotely by radio signals coming from another vehicle that was following behind it. It wasn’t exactly “self-driving,” but it was still a remarkable achievement for that era.

In 1958, General Motors exhibited an experimental prototype called the Firebird III, which featured an electric guidance system that allowed it to navigate an automatic highway while the driver relaxed without hands on the wheel. However, it worked only on a quarter-mile stretch of road with embedded circuits.

In 2005, five autonomous vehicles competing in a DARPA-sponsored challenge successfully completed a 132-mile course in the Mojave Desert. Since then, companies including Google, Tesla, Apple, and a host of startups have invested billions in autonomous driving technology, but despite the substantial investment, it’s still being used only on a small scale in pilot projects in a handful of cities.

Why is making this technology commercially viable proving so challenging?

A self-driving vehicle relies on a range of technologies, including LiDAR sensors, MEMS sensors (such as accelerometers), GPS, 5G cellular communications, and artificial intelligence. These technologies have matured at different rates, and their cost trajectories have likewise evolved at different paces.

Consider, for instance, a LiDAR system, which shines lasers on a vehicle’s surroundings and the objects in its path, measures the reflections, and uses the differences in return times and wavelengths to make digital 3D representations that determine locations. Hughes Aircraft Company introduced this technology back in 1961, and the National Center for Atmospheric Research was one of the first to use the technology to analyze clouds and air pollution. Initially costing millions, LiDAR systems now cost $100 to $200. Similarly, GPS capabilities, first developed during the 1970s, didn’t become widely available until the early 2000s; by the mid-2010s, they became a chip-based technology that costs under $5 per unit. In the fast-moving field of artificial intelligence, the systems that allow autonomous vehicles to recognize obstacles and make decisions are changing in capability and cost by the month. Only when the full suite of technologies is robust and affordable enough can autonomous driving fully deliver on the promise that researchers have contemplated for more than 100 years.

So how do companies manage when an imagined product relies on a group of innovations that emerge slowly—a concept we’ve come to think of as “slow-cooking technologies”? In these situations, when the development of the underlying technologies rises and falls over decades, tracking it and creating an R&D strategy around it (without investing too much too early) can pose a significant challenge.

Most corporations can’t afford to invest much effort in tracking technologies that may take decades to be effective and affordable enough to commercialize. At the same time, when the right group of technologies reaches the right maturity and cost, they can be extremely disruptive—and because they often blindside companies, their impact can be devastating.

One example of that has been playing out in front of us: the internet. The academic version went online in 1969, but it took more than two decades (and the invention of internet protocols, HTML, the web browser, and eventually, broadband access) to power the dot-com boom and countless innovations in the decades since. It’s an innovation that’s disrupted entire industries, and companies that struggled to figure out the right time to invest in an internet strategy often didn’t survive.

Determining when to place bets on emergent technologies is a science, not an art. The most fundamental thing a CEO should do is stop thinking about innovation through singular technologies. Although many executives don’t realize it, the innovations that create new industries and ecosystems, or shake up existing ones, are often made up of multiple technologies. CEOs should therefore identify the various sciences and technologies that underlie a potential innovation and establish a process for monitoring and managing them as they develop, even if that may take decades.

Many companies neglect this because of a simple but incorrect belief: Waiting makes sense. The prevailing wisdom is that the greatest number of business opportunities will emerge after the technologies have been commercialized but before a competitor has time to dominate the market. Based on our research and professional experience, we believe this is a mistake. Companies that try this typically miss the opportunity and will never be in a position to gain an advantage.

Rethinking Technology Mapping

Companies already use sophisticated tools to predict how quickly a technology might be ready for commercialization. One example is the NASA-created Technology Readiness Level (TRL); another is diffusion curves. These visual representations are supposed to help with long-term R&D planning, research funding, and M&A strategy. Too often, however, these tools focus on forecasting individual technologies rather than the suite of innovations necessary to make something like autonomous driving possible.

In addition, companies have relied on futurists, expert panels, and trend extrapolation to assist in technology planning. However, these methods can be marred by personal fixations, opinions, and flaws in gauging market readiness. Above all, conventional technology forecasting doesn’t work well when companies must cope with novel technologies and their interplay.

Take, for instance, some of the key technologies in the iPhone. Each developed along a different trajectory, became available at an extremely high price, and was first used in applications at the bleeding edge of technology. Lithium-ion batteries, graphical user interfaces, and densely packed silicon integrated circuits were pioneered in the 1960s. By the end of the decade, NASA had become the biggest buyer of integrated circuits, which fueled the growth of companies such as Fairchild Semiconductor and, later, Intel. The resulting fall in the price of eight-bit microprocessors led to the development of the first PCs, such as the Apple II, launched in 1977. Indeed, many of the technologies that make up an iPhone had been around for 50 years before the capabilities, sizes, and costs fell sufficiently for Apple to combine them and launch the iPhone in 2007.

The most effective way of coming to grips with transformational innovations is to identify all the emergent technologies that could affect an industry in the long run, prioritize them in terms of their possible functions and impact, and focus on the three or four key ones. That will require tracking research trends in universities and think tanks; analyzing government policy trends, venture capital, global foundations, and competitions; monitoring corporate R&D spending; and following NIH, NSF, and DARPA calls for proposals and their outcomes. For instance, DARPA was highly active in autonomous vehicle activities in the early 2000s, and commercial automakers were paying close attention to vision systems, LiDAR, embedded machine learning, and early AI software.

Designing the Technology Feasibility Matrix

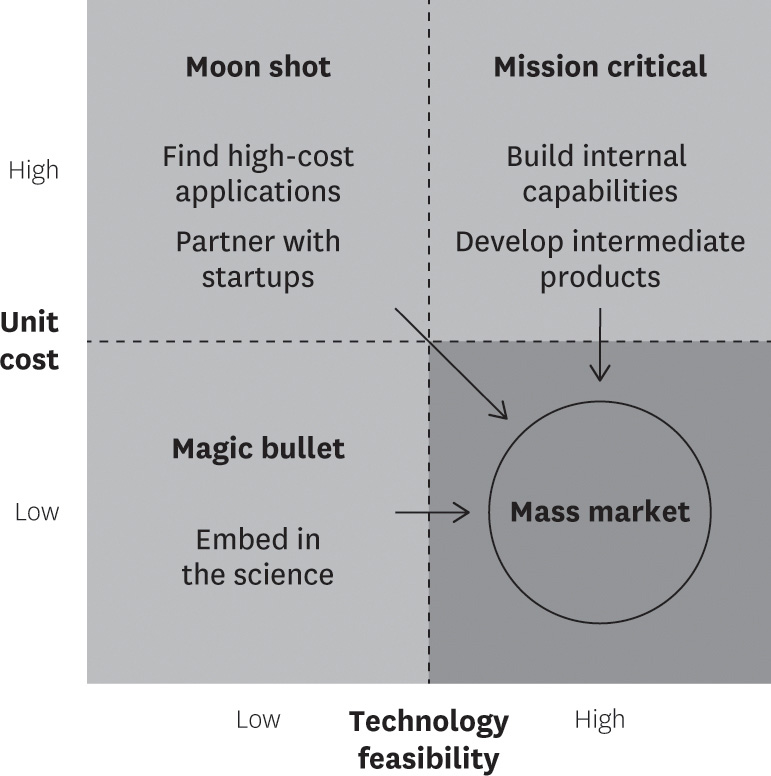

After identifying the most promising emergent technologies, companies must ask two questions about each: Can it work? And at what cost? The answers will allow you to create a Technology Feasibility Matrix, a two-by-two we find helpful in our teaching and consulting work.

The Technology Feasibility Matrix

As technology improves and costs fall, innovations will move toward mass market viability in the lower-right quadrant. For innovations that involve multiple technical advances, identifying where each technology is today and its likely trajectory toward the mass market can help companies develop the right strategy.

Source: Tucker J. Marion, David Deeds, and John H. Friar.

Technologies fall into four quadrants: moon shots, mission critical, magic bullets, or mass market.

The challenge of managing transformational innovations gets compounded when the underlying technologies fall into different quadrants at a point in time. In the case of autonomous automobiles, for instance, developing the artificial intelligence that can drive a vehicle is still a moon shot, while 5G cellular car-to-car communications are feasible but investment intensive because of the infrastructure that has to be created, making it mission critical.
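For readers who prefer to see the two-by-two as logic, here is a minimal sketch in Python. It treats the two questions (Can it work? At what cost?) as yes/no judgments, which is a simplification of ours. The text pins down only two quadrants: mission critical (feasible but investment intensive) and mass market (works and costs have fallen). Placing moon shots at "not yet working, not yet affordable" and magic bullets at "affordable but not yet working" is an assumption made for illustration, and the class, function, and field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Technology:
    name: str
    works_today: bool  # answer to "Can it work?" (simplified to yes/no)
    affordable: bool   # answer to "At what cost?" (simplified to yes/no)


def quadrant(tech: Technology) -> str:
    """Map the two answers to a quadrant label.

    "mission critical" and "mass market" follow the article's description;
    the placement of "moon shot" and "magic bullet" is an assumption.
    """
    if tech.works_today and tech.affordable:
        return "mass market"
    if tech.works_today:
        return "mission critical"  # feasible but investment intensive
    if tech.affordable:
        return "magic bullet"      # assumed: cheap, feasibility unproven
    return "moon shot"             # assumed: neither proven nor cheap


# Examples drawn from the article's own discussion of autonomous vehicles.
portfolio = [
    Technology("AI that can drive a vehicle", works_today=False, affordable=False),
    Technology("5G car-to-car communications", works_today=True, affordable=False),
    Technology("GPS chip", works_today=True, affordable=True),
]

for tech in portfolio:
    print(f"{tech.name}: {quadrant(tech)}")
```

Listing a single innovation's components this way makes it easy to see when they sit in different quadrants at the same moment, which is the situation that makes these innovations hard to manage.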

That leads us to the next dimension of the problem: When will the underlying technologies of a transformational innovation likely come together? Although finding answers to that question may not be easy, it isn’t impossible either. The key, our studies show, is to focus on cost.

By analyzing the R&D investments needed to develop the key technologies and their production costs, and by projecting both as far into the future as possible, companies can come to grips with how cost will influence the creation of a transformational innovation. That will allow CEOs to determine which technologies to work with and how to manage them, yielding a strategic focus for R&D.

It always takes a long while before the costs of all the underlying technologies fall to the point where companies can integrate them into a transformational innovation that can be manufactured at a viable price point. This represents a cost convergence window—the point at which it becomes possible for businesses to combine these technologies into new products within the foreseeable future. The ability to manage the convergence can make or break a company, so it’s essential to get this right.
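One rough way to reason about when a convergence window might open is to project each technology's cost forward and see which one reaches a viable price last. The sketch below assumes a constant annual percentage decline in cost, which is our simplifying assumption and not a model the authors prescribe. The function names, the technologies, and every number are hypothetical placeholders.

```python
import math


def years_to_target(current_cost: float, target_cost: float, annual_decline: float) -> float:
    """Years until cost falls to target, assuming a constant yearly percentage decline."""
    if current_cost <= target_cost:
        return 0.0  # already at or below the viable price point
    if not 0.0 < annual_decline < 1.0:
        raise ValueError("annual_decline must be between 0 and 1")
    # Solve current_cost * (1 - annual_decline) ** years == target_cost for years.
    return math.log(target_cost / current_cost) / math.log(1.0 - annual_decline)


def convergence_year(
    start_year: int,
    technologies: dict[str, tuple[float, float, float]],
) -> tuple[int, str]:
    """Return the first year in which every technology is at or below its
    target cost, together with the name of the slowest (pacing) technology."""
    waits = {name: years_to_target(*params) for name, params in technologies.items()}
    slowest = max(waits, key=waits.get)
    return start_year + math.ceil(waits[slowest]), slowest


# Hypothetical inputs: (current unit cost, target unit cost, annual decline rate).
example = {
    "technology A": (1000.0, 200.0, 0.50),
    "technology B": (400.0, 100.0, 0.20),
    "technology C": (4.0, 5.0, 0.10),  # already cheap enough
}

year, pacing = convergence_year(2025, example)
print(f"Window opens around {year}; paced by {pacing}")
```

In this made-up example the window is set by the slowest-declining component, not the most expensive one. Real cost curves are rarely this smooth, so a projection like this is a prompt for discussion, not a forecast.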

Consider Eastman Kodak, which could capitalize on neither its invention of digital photography nor its belated push into digital cameras and net-based print platforms. It had to declare bankruptcy in 2012, by which time social media and smartphones had entirely changed how users share and consume images. Kodak shows how navigating the convergence window is critical for incumbents, both early and late in the game, especially since challengers will be quick to use emerging technologies during this period. Startups tend to move quickly, creating winner-take-all network effects before all the technologies that can create a game-changing product or service emerge.

Companies can use several approaches to manage emergent technologies before commercializing:

If they spot the convergence window early, there will be enough time for them to pursue a proactive approach. Proactive strategies allow companies to shape the trajectories of developing technologies so the latter meet their future needs.

Another strategy is using intermediate products to build assets and refine capabilities. Netflix followed this strategy for 10 years by developing its DVD delivery business while broadband internet matured to the point where streaming became viable.

Conversely, corporations that latch onto the convergence window later than rivals must move quickly to catch up with a reactive strategy. When the window narrows, companies can quickly pilot projects with advanced competitors, as Honda recently did by partnering with GM on its Prologue EV. This helps companies test applications and capabilities without overcommitting resources while the market matures. In reactive efforts, companies can also gain firsthand access to disruptive technologies by partnering with startups. We see this in the dramatic growth in the number of pilots between established corporations and AI ventures.

Another option is targeting high-cost entry points, such as luxury or niche markets, which can sustain early adoption until costs fall further, as seen with luxury EVs or the use of integrated circuits in space and military applications in the 1960s and early 1970s.

Revisiting Technology Management Strategies

Although it’s important to understand the disruptive potential of any new technology, the convergence of slowly evolving technologies is a more pressing challenge today. Managing it undoubtedly requires a meta strategy.

For one thing, CEOs shouldn’t behave as if only the R&D or IT function needs to worry about the challenge; they must equip all levels of the organization to develop an understanding of emerging technologies. This aids road mapping, R&D, and planning for transformational innovations. The process will require the creation of multiple modes of inquiry and development, from building better innovation ecosystems to observing the technology culture of the next generation.

Teams that understand, develop, and implement these plans must span organizational boundaries. DARPA, for instance, has well-honed processes to bring experts from industry, government, and academia to work on specific projects with its teams. Cross-pollination increases knowledge quality, allowing technology teams and functions to develop a more complete picture of the future.

No organization can hope to develop a complete picture of all the technologies that may affect it or be able to predict every unexpected scientific discovery. But every organization can try to futurecast, which develops an understanding of tomorrow’s possibilities and works back from it to create useful lines of inquiry. For instance, NASA’s Convergent Aeronautics Solutions (CAS) Project is working with design firms, academic institutions, companies, startups, venture capitalists, and others to understand how air taxi technologies are evolving, a process it calls mapping. It is systematizing the mapping process to better understand and tackle gaps in current and near-future capabilities.

Over the last decade, businesses have started combining the engineering machinery, transportation systems, and physical networks spawned by the Industrial Revolutions of the eighteenth century with the smart devices, intelligent networks, and expert systems enabled by the Internet Revolution of the twentieth century to spark a fourth wave of global innovation. Consequently, nothing gives a company a competitive edge over rivals as much as disruptive technology does.

However, instead of simply waiting and worrying about getting disrupted, companies, especially the market leaders, would do well to manage the dynamics in what is proving to be a golden era of technological change and convergence. That requires investing time and attention into tracking slow-cooking technologies.

TAKEAWAYS

Technological breakthroughs often rely on multiple innovations maturing at different rates. Companies must navigate these “slow-cooking technologies” wisely to avoid missing opportunities or overinvesting too early. Autonomous vehicles and past innovations, such as the internet, illustrate how timing and cost convergence are crucial to success.

Beyond singular innovations. Leaders should track entire ecosystems of emerging technologies rather than focusing on single breakthroughs.

Strategic timing matters. Waiting until technologies are commercialized often results in missed opportunities. Companies must monitor cost and feasibility to act at the right moment.

Managing the convergence window. Firms should adopt proactive, reactive, or hybrid strategies to integrate emerging technologies before competitors dominate.

Cross-disciplinary approach. Collaboration between industries, academia, and government accelerates technological readiness and competitive advantage.
